Kimi K2.5 just dropped... (WOAH)

Summary

The arrival of Kimi K2.5, an 'open-source, open-weights' model specializing in coding, agent swarms, and visual tasks, demands rigorous scrutiny. It is not merely an incremental improvement but a significant architectural shift with profound implications. The celebratory rhetoric surrounding its capabilities often overshadows the ethical questions we must ask before widespread deployment.

Kimi K2.5 positions itself as a state-of-the-art, natively multimodal model, pre-trained on 15 trillion mixed visual and text tokens. This foundational scale underpins its advertised prowess across diverse domains. It is, by all accounts, a technical marvel, promising greater efficiency and creative scope. Its ability to take visual and textual inputs together points to a future where AI interaction is far more fluid and integrated.

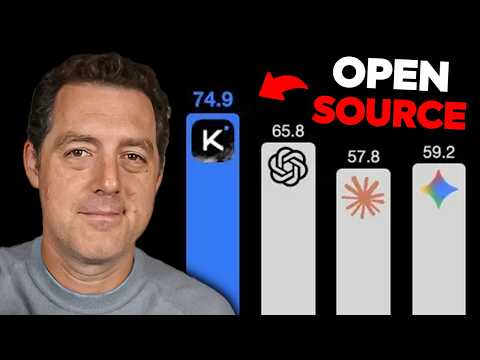

From a performance standpoint, Kimi K2.5 is exceptionally competitive. On agentic benchmarks such as HLE-Full, BrowseComp, and DeepSearchQA, it outperforms established models such as GPT-5.2, Claude Opus 4.5, and Gemini 3 Pro. Its coding capabilities, while not leading on every metric, remain robust, notably beating Gemini 3 Pro on SWE-bench Verified. Where it truly distinguishes itself is in vision tasks, achieving strong results on MMMU-Pro and demonstrating frontier performance in video understanding, even surpassing Claude Opus 4.5 on certain visual benchmarks. The model's capacity to turn raw images into fully functional, aesthetically pleasing websites, or to debug code autonomously through iterative visual feedback, is striking.

Yet the true game-changer, and perhaps the greatest source of ethical trepidation, lies in its agent swarm capabilities. Kimi K2.5 can self-direct up to 100 sub-agents, orchestrating parallel workflows and executing up to 1,500 tool calls. This allows it to decompose complex tasks into manageable steps, delegate them, and then synthesize the results, reportedly reducing execution time by 80% for complex, long-horizon workloads. We see demonstrations of this in intricate maze-solving, where it navigates 113,000 steps, and in managing diverse 'office tasks' such as creating PDFs, manipulating Excel documents, and even generating slideshows. This distributed intelligence, where an orchestrator model coordinates specialized sub-agents (e.g., AI researcher, fact checker, web developer), suggests a future of highly autonomous, self-optimizing AI systems.
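The decompose, delegate, and synthesize loop described above can be sketched in a few lines of Python. This is a minimal illustration of the general orchestrator pattern, not the model's actual implementation: `decompose`, `run_sub_agent`, and `ToolBudget` are hypothetical names, the sub-agents only simulate work, and the two limits simply mirror the 100 sub-agent and 1,500 tool-call figures quoted above.

```python
import asyncio
from dataclasses import dataclass

MAX_SUB_AGENTS = 100   # cap on sub-agents, taken from the figure quoted above
MAX_TOOL_CALLS = 1500  # cap on total tool calls, taken from the figure quoted above


@dataclass
class SubTask:
    role: str    # e.g. "AI researcher", "fact checker", "web developer"
    prompt: str


class ToolBudget:
    """Shared counter so every sub-agent draws from one tool-call budget."""

    def __init__(self, limit: int) -> None:
        self.limit = limit
        self.used = 0

    def spend(self, n: int = 1) -> None:
        if self.used + n > self.limit:
            raise RuntimeError("tool-call budget exhausted")
        self.used += n


def decompose(goal: str) -> list[SubTask]:
    """Hypothetical planner; a real orchestrator would ask the model to plan."""
    roles = ["AI researcher", "fact checker", "web developer"]
    return [SubTask(role, f"{goal} ({role} part)") for role in roles]


async def run_sub_agent(task: SubTask, budget: ToolBudget) -> str:
    """Hypothetical stand-in for a model call; it spends one tool call and waits."""
    budget.spend(1)
    await asyncio.sleep(0.01)  # simulated latency of the tool call
    return f"[{task.role}] finished: {task.prompt}"


async def orchestrate(goal: str) -> str:
    tasks = decompose(goal)[:MAX_SUB_AGENTS]  # never exceed the sub-agent cap
    budget = ToolBudget(MAX_TOOL_CALLS)
    # Sub-agents run concurrently; gather returns results in task order.
    results = await asyncio.gather(*(run_sub_agent(t, budget) for t in tasks))
    return "\n".join(results)  # naive synthesis step: concatenate the reports


report = asyncio.run(orchestrate("summarize a model release"))
print(report)
```

Even in this toy form, the accountability question is visible: the only controls are the two caps and whatever the planner decides to delegate, and nothing in the loop reviews what an individual sub-agent actually did.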

The implications of such a system are vast and, frankly, disquieting. The promise of significantly lower API costs, mere cents per million tokens compared to dollars for competitors, democratizes access to this advanced AI. While this accessibility is often framed positively, it also means that powerful, complex, and poorly understood AI systems can be deployed by a wider array of actors, potentially without the necessary ethical guardrails or any understanding of their emergent behaviors. The notion of 'open-source, open-weights' sounds inherently transparent, but when a model requires a prohibitive 632 GB of VRAM for local execution, its practical 'openness' becomes questionable for most users. This forces dependency on cloud services, raising immediate concerns about data sovereignty and privacy, a concern voiced directly regarding the transfer of data to 'Chinese servers.'
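To put the 632 GB figure in perspective, a rough back-of-the-envelope calculation helps. The per-card memory sizes below are assumptions chosen for illustration (an 80 GB data-center accelerator and a 24 GB consumer card), and the estimate ignores any extra memory needed for context and activations, so the real requirement would be higher.

```python
import math

MODEL_VRAM_GB = 632        # figure quoted above
DATACENTER_GPU_GB = 80     # assumption: one 80 GB data-center accelerator
CONSUMER_GPU_GB = 24       # assumption: one 24 GB consumer card


def gpus_needed(model_gb: float, gpu_gb: float) -> int:
    """Minimum number of cards whose combined memory holds the weights."""
    return math.ceil(model_gb / gpu_gb)


datacenter_cards = gpus_needed(MODEL_VRAM_GB, DATACENTER_GPU_GB)
consumer_cards = gpus_needed(MODEL_VRAM_GB, CONSUMER_GPU_GB)
print(datacenter_cards)  # 8
print(consumer_cards)    # 27
```

Under these assumptions, local execution means a full multi-GPU server rather than a workstation, which is why the weights being downloadable does little for most individuals.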

Moreover, relying solely on benchmarks, however impressive, to gauge a model's true utility or ethical robustness is a dangerous oversimplification. Benchmarks indicate what a model can do, not what it should do or how it will behave in nuanced, unpredictable real-world scenarios. The possibility of 'benchmaxing,' or overfitting to specific tests, means we must move beyond quantitative scores and engage in thorough, independent 'vibe checks' and empirical testing in contexts that challenge its ethical alignment. As AI models develop distinct 'personalities,' specializing in coding, vision, or agentic work, understanding these biases and propensities becomes paramount.

Kimi K2.5 represents a formidable advance in AI. Its capacity for multimodal understanding, sophisticated code generation, and complex agentic orchestration promises to reshape numerous industries. However, this progress comes with a profound ethical burden. We must move beyond simply marveling at its capabilities and instead engage in rigorous, critical discourse about the control, accountability, privacy, and societal impact of these self-directing, massively complex systems. The true measure of Kimi K2.5 will not be its benchmark scores but how diligently we address the ethical complexities it introduces, ensuring that 'can we?' does not blindly override 'should we?' in our pursuit of technological advancement.